Google Local Services Ads have been quite a headache lately because of the challenges with updating budgets. It’s been an experience dealing with these issues.

Google Local Services Ads (LSA) could revolutionize businesses by providing a platform for expansion and greater visibility, yet it has its share of problems that seem unbelievable for a company valued at $2 trillion.

An Unsettling Encounter for Newcomers

For beginners in LSA (Local Services Ads), setting up your account can be a challenge. Creating an account can turn into a headache as you find yourself repeatedly filling out forms, only to see them reset just when you thought the data entry marathon was over. I’ve faced this issue myself on several occasions across different web browsers. After struggling through repeated tries with popular browsers like Chrome and Firefox, I eventually found success with the Brave browser.

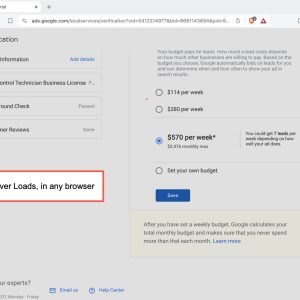

Struggles with Keeping Budgets Updated

Even after getting past those obstacles, the problems didn’t end there. Updating the budget section on the LSA platform was a real challenge, and it seemed almost impossible. No matter which browser I tried, whether Brave or others, the system kept spinning without saving any updates. This persistent issue made the platform nearly unusable.

Seeking Betterment

It’s surprising that a company as big and capable as Google would launch a product with basic issues like these. The Google Local Services Ads program holds great promise for small businesses but seems pretty shaky in its current form.

Google must tackle these problems right away, because a product with this potential shouldn’t be held back by technical issues that make it barely usable. If Google can fix these glitches and make LSA more reliable, it could really become an asset for businesses. For now, users are stuck dealing with a service that doesn’t meet their expectations.

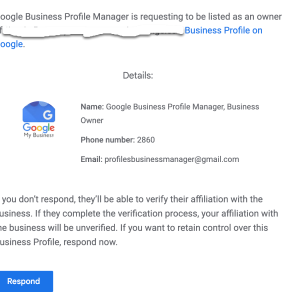

We’re seeing an alarming surge in attempts by spammers and bots trying to exploit Google’s Business Profile Manager. The common approach is to request ownership of your Google My Business (GMB) profile pages. The threat is real, and it’s widespread; we’re seeing a massive volume of these requests across all clients.

Here’s how it works: You receive an email request for ownership of your GMB page. The email may seem legitimate at first glance, but it’s a scheme. Once you accept the request, these unscrupulous actors can gain control of your business listing, which allows them to manipulate your business’s public-facing information, leading to a plethora of potential issues including misinformation, fraudulent reviews, or worse.

These emails can be cunningly crafted and persuasive. That’s why it’s absolutely crucial to be vigilant when dealing with ownership requests. An unexpected request should always raise a red flag.

Teamwork is the key to combating this threat. Good communication across all departments – be it management, IT, marketing, or staff – is vital. Make sure everyone is aware of this issue, and that they know what to do if they receive such a request.

Here are a few steps to prevent falling into this trap:

- Be skeptical of unsolicited ownership requests. Only grant ownership to trusted individuals who need it.

- Implement a protocol for dealing with GMB ownership requests.

- Regularly check the users list in your GMB dashboard to make sure all owners and managers are legitimate.

- In case of doubt, don’t hesitate to contact Google’s support team.
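The regular check of the users list can be made routine with a small script. The sketch below is only an illustration: it assumes you copy the current owners and managers out of the dashboard by hand, it does not call any Google API, and every name in it (`APPROVED_USERS`, `find_unexpected_users`, the example addresses) is hypothetical.

```python
# Hypothetical audit of a business profile's user list against an allowlist.
# The data shape and all names are illustrative assumptions; no Google API is called.

APPROVED_USERS = {
    "owner@example.com": "owner",
    "marketing@example.com": "manager",
}


def find_unexpected_users(current_users, approved=APPROVED_USERS):
    """Return entries that are not approved, or whose role differs from the approved role."""
    flagged = []
    for user in current_users:
        email = user["email"].strip().lower()
        role = user["role"].strip().lower()
        # An unknown email or an unexpected role (e.g. manager promoted to owner) is flagged.
        if approved.get(email) != role:
            flagged.append({"email": email, "role": role})
    return flagged


if __name__ == "__main__":
    # Example list copied by hand from the profile's users page (made-up values).
    users = [
        {"email": "owner@example.com", "role": "Owner"},
        {"email": "marketing@example.com", "role": "Owner"},
        {"email": "unknown@example.net", "role": "Manager"},
    ]
    for entry in find_unexpected_users(users):
        print(f"Review access for {entry['email']} ({entry['role']})")
```

Anything the script flags is a prompt for a human to look closer, not proof of an attack; a colleague whose role changed legitimately will show up here too until the allowlist is updated.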

Security of your online assets should be a top priority. Please share this message to ensure we minimize the impact of these potential attacks.

Google Bard is a striking new addition to the world of search technologies, offering new opportunities for users to search and access content from diverse digital sources. Google Bard promises to change the way in which we find, access and consume content.

Through its cutting-edge technology, Google Bard enables users to retrieve content and data from a wide range of digital sources – including websites, videos, audio, documents, and more. Furthermore, by leveraging powerful algorithmic techniques, Google Bard will allow users to access and refine search results in an entirely new way – delivering more precise search results.

The implications of this remarkable new search technology will be far-reaching; from cutting edge advancements in the field of data analysis and artificial intelligence, to the complete restructuring of how content and information is accessed and consumed – Google Bard has the potential to disrupt the entire search industry.

Google Bard has revolutionized how and what we search on the internet. It broadened the context of searching, being the first to utilize artificial intelligence to explore the web’s deepest depths.

Its impact goes far beyond what was once thought possible. This article focuses on Google Bard: what it encompasses and the impact it has had on search culture.

From introducing machine learning algorithms to automated web content crawling, we have a clearer understanding of the now-ubiquitous search engine.

Google Bard is a search engine that makes use of natural language processing (NLP) for search queries. It allows users to search for information by asking a question in plain language, as opposed to standard keyword-based search queries.

Google Bard attempts to understand the intent behind the question, and then returns a list of results that provide an answer. Not only does it recognize individual words or phrases from a query, but it also understands the underlying relationship between those words or phrases in order to better understand the user’s intended query.

Through this approach, Google Bard tries to provide an improved search experience for casual users.

Google Bard is a free and open-source text editor for switching between programming languages. It allows users to rapidly switch between languages and make syntax corrections, including a comprehensive set of its own syntax rules, through its built-in auto-complete feature.

It is highly recommended for developers and allows users to code quickly and efficiently. The intuitive drag-and-drop editing feature makes it easy to build complex applications and explore new ways to code.

It is designed to work well with both source code and non-programming languages, allowing users to move quickly between the two. In addition, it runs on multiple platforms, making it an ideal choice for developers who need to develop for different systems.

Google Brain is a large-scale artificial intelligence research project using deep learning techniques developed by Google. It brings together researchers and engineers from various Google products to work on deep learning applications such as computer vision, language processing, and knowledge extraction.

The researchers utilize neural networks, a powerful data modeling tool based on the workings of the human brain, to teach computers to recognize patterns and solve problems. Through research, the team works to improve the quality of machine intelligence and capabilities of natural language processing.

Google Brain is used to teach computers to recognize objects in images, understand and respond to text-based conversations, and aid in research and development of self-learning machine learning models.

Google Bard is an innovative new tool that allows users to quickly search for facts, images, videos, and documents through the web. Utilizing the latest in natural-language processing, Google Bard enables users to accurately direct their search queries to the most relevant sources, thereby saving valuable time.

Additionally, Google Bard incorporates a “reverse search” feature – using only an image or photo as input – allowing for a comprehensive search of the web for similar images. Google Bard is also extremely easy to use; it does not require any sophisticated understanding of computer programming or prior knowledge of the web.

Google Bard’s efficient, user-friendly interface, combined with the remarkable power of its search capabilities, makes it an invaluable resource for customers seeking quick access to the most accurate information.

Google Bard is a new language translation app that allows users to communicate with a foreign language speaker through a microphone and patent-pending speech recognition technology. While this exciting new technology has numerous advantages, there are some potential challenges that can arise when using it.

One significant challenge is accuracy. There is still a chance for unrecognized words or misheard words due to sound quality or background noise.

Additionally, there is the potential for cultural misunderstandings when using translated sentences as native speakers may use language differently from region to region. Even though the app has been tested extensively, it is possible to encounter technical issues such as poor communication due to poor internet connections.

Lastly, there is an inherent limitation in the app because not all foreign languages are available, which poses a problem for those who need to translate rarer languages. All in all, while Google Bard can be helpful in certain situations, potential users should be aware of some of the obstacles they may face.

Google Bard is a powerful tool for assessing the impact of search engine optimization. It helps marketers to better understand how their SEO efforts impact the visibility of their website by tracking the number of clicks, impressions and conversions generated by each keyword.

It provides insights into keyword performance across multiple channels including organic search and paid search. Additionally, it can help identify opportunities for optimization, identify patterns in user behavior, and inform strategic decisions about future changes.

By understanding the impact of SEO, businesses can make better decisions about how to increase their visibility and drive better results from their website.

Google Bard is a new technology that promises to revolutionize the way that people search for information online. It uses enhanced artificial intelligence (AI) to examine how people search for information and delivers more accurate search results.

Google Bard will be able to anticipate user searches, learn more about a particular query, and deliver more contextual information. Additionally, Google Bard will use neural networks to help better understand what the user is searching for even if the query is incomplete or unclear.

Google Bard is expected to provide more efficient and faster search results with fewer errors and more precise answers than in the past. Finally, Google Bard understands that different users have different needs and preferences, allowing for more personalized search results tailored to the individual.

As it continues to evolve, Google Bard will surely change the way that people search online.

Web Moves, a team of Internet strategists, can help you understand how Google Bard will affect your search engine results. Google Bard is a new service that allows webmasters and content creators to quickly analyze and diagnose web performance.

It can help content creators to optimize their pages for better performance, which in turn can yield higher search engine rankings. With it, they can also track page loading times, troubleshoot slowdowns and ensure none of their structural coding has a significant impact on their website ranking in search engine results.

Ultimately, Google Bard can help content creators better manage their online presence, resulting in improved SEO and better overall search engine results.

Google Bard is a new ranking algorithm from Google that leverages natural language processing to better understand the intent behind web searchers and improve search results. Google is constantly striving to make its search engine as accurate and useful as possible, and Bard is designed to do just that.

With Bard, web searchers can rest assured that their search results will be more relevant to their query and ultimately provide them with the answers they are looking for. The launch of Google Bard is an exciting development that will help people make the most of their online searches.

Just about everyone wants a chance to rank highly on Google. After all, this is usually the easiest way to get in touch with potential customers and leads. However, reaching that goal can sometimes seem impossible, and consistently adding content to your site might not always be feasible.

Thankfully, Google offers a variety of tools to help boost your rankings that don’t involve writing another keyword-rich blog post. Below, we’ll discuss a few opportunities to consider that can help you achieve higher organic search results.

If your business is a local service or has a brick-and-mortar location, achieving a coveted spot in the Google Maps “three-pack” is a pretty big deal. This means that your contact information and website link will show up in the first three Google Maps spots when a person searches for a specific local solution. To increase your chances of securing this ranking, be sure to claim and optimize your Google My Business (GMB) listing, a public profile that displays important information about your organization.

Google Maps is one of the first things people see when they look for a specific business within their local area. If you aren’t listed, it makes it much more difficult for customers to find you. Furthermore, there’s a good chance that your competitors are already using this method, so you must follow suit to ensure your potential leads aren’t going straight to them.

Adding your organization to Google Maps is relatively simple. First, you’ll need to sign up for a Google My Business account. Then, you can add your company by searching for it on the GMB website and choosing whether you want your location to appear on Google Maps. After adding the requested information, such as your business category, you’ll need to verify your identity with Google (by mail, phone, or email).

Another option is called FAQ schema markup. This should be added anywhere there is a question and corresponding answer on your website. When someone searches that question, the special code allows for a portion of the answer to show up in search results. In short, this can be a valuable way to score added traffic.

One of the easiest ways to connect with your audience is to position yourself as a knowledgeable leader in your industry. Using these schema markups, you are making it easy for Google users to see that you have answers related to what they want to know. This puts you in a prime trust position when they’re ready to make a purchase or invest in your services.

To start using FAQ schema, you’ll first need to write and publish question-and-answer style content. You should format the text so that the question is in bold or uses a header tag. Then, you’ll need to insert the schema markup, which requires a bit of HTML knowledge. Google’s developer site has the official explanation of how to do this.
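For a sense of what the markup looks like, here is a minimal sketch that builds it as JSON-LD using the schema.org `FAQPage`, `Question`, and `Answer` types. The helper names (`build_faq_jsonld`, `render_script_tag`) and the sample question are made up for illustration, and the output should be checked against Google’s own guidelines before you rely on it.

```python
import json


def build_faq_jsonld(qa_pairs):
    """Build a schema.org FAQPage structure from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }


def render_script_tag(data):
    """Wrap the JSON-LD in the script tag that goes into the page's HTML."""
    # Escape "</" so answer text cannot close the script element early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


if __name__ == "__main__":
    # Hypothetical question and answer; use the text that is visible on your page.
    faq = build_faq_jsonld([("What are your hours?", "We are open 9 to 5.")])
    print(render_script_tag(faq))
```

The printed `<script>` element is pasted into the HTML of the page that already shows the same questions and answers to visitors.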

Of course, it isn’t just Google Maps and FAQs that reach the top of Google search results. Other snippets appear when content is deemed informative and valuable. While there isn’t a way to force this on your own, the easiest method is to continue to add engaging content regularly and keep track of what’s working well.

Again, this goes back to positioning yourself as a leader in your industry. Google uses snippets all the time, so it’s crucial that internet users see your content – not your competitors’. Therefore, you need to secure as many snippets as possible to maintain visibility on search engine results pages (SERPs).

There are a variety of strategies to optimize for certain Google snippets. For example, using H2 and H3 tags or highlighting top learning points in your text can really help. Again, this comes down to publishing high-quality content on a regular basis.
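A quick way to review how a page uses H2 and H3 tags is to list them in order. The sketch below relies on Python’s standard `html.parser` module; the class and function names are illustrative, and it only shows the outline a page presents. It cannot predict whether Google will pick the page for a snippet.

```python
from html.parser import HTMLParser


class HeadingOutline(HTMLParser):
    """Collect the text of H2 and H3 headings in document order."""

    def __init__(self):
        super().__init__()
        self.headings = []    # list of (tag, text) tuples
        self._current = None  # heading tag currently open, if any
        self._parts = []      # text fragments seen inside the open heading

    def handle_starttag(self, tag, attrs):
        if tag in ("h2", "h3"):
            self._current = tag
            self._parts = []

    def handle_data(self, data):
        if self._current:
            self._parts.append(data)

    def handle_endtag(self, tag):
        if tag == self._current:
            # Join fragments and collapse runs of whitespace.
            text = " ".join("".join(self._parts).split())
            self.headings.append((tag, text))
            self._current = None


def outline(html):
    """Return the (tag, text) pairs for every H2 and H3 in the given HTML."""
    parser = HeadingOutline()
    parser.feed(html)
    parser.close()
    return parser.headings


if __name__ == "__main__":
    page = "<h1>Guide</h1><h2>What is FAQ schema?</h2><p>Text</p><h3>Step one</h3>"
    for tag, text in outline(page):
        print(tag, text)
```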

Another great tool to consider utilizing as a part of your Search Engine Optimization strategy is Google Discover. While there’s no way to control whether or not your website is featured, there are a few things you can do to increase your chances. First, make sure the content you create is centered around a specific key entity, which is the term Google uses to describe particular interest groups. Next, create blog posts and articles for your website that are generally time-sensitive. Google Discover only includes content on what’s happening now and for a short period of time.

More than ever, people are turning to the internet to stay connected with what is going on in the world. By looking up topics on Google Discover and publishing content on your website that could be featured, you’ll put yourself in front of a wider audience. In the end, this equates to higher traffic and more potential for leads.

Take a look at Google Discover to see where other businesses in your niche are featured or identify specific trends. Then, publish content on your website that includes these categories or topics.

With so many different ways to use Google to increase your organic search rankings, choosing the best strategies can be somewhat overwhelming. That’s why it’s important to work with a digital marketing partner you can trust. These experts can help you cut through the noise and determine which additional steps to take—or skip—to improve your overall ranking and increase traffic. Best of all, they usually have a whole list of other tips to get your entire marketing plan on the right path, which often means the difference between success and spinning your wheels.

Author Bio

Alyssa Anderson is the Content Manager at Zero Gravity Marketing (ZGM), a digital marketing agency in Madison, CT. ZGM is known for developing overarching marketing strategies and specializes in Pay-Per-Click (PPC), Search Engine Optimization (SEO), Content Marketing, Social Media, Development, Design, and eCommerce services.

As most of us (hopefully) know, one of the greatest problems facing our planet today is pollution. Tons of carbon emissions and immense trash heaps drown the environment, and this problem only increases with the population. We continue to create more and more waste, and fail to find an effective way to manage it.

But what does this have to do with Google search results?

Well, there is another kind of waste hiding beneath the headlines, impacting our daily lives: digital waste. This “digital waste” clouds up our search results, inhibiting us from finding the answers we need.

Google uses a variety of highly complex algorithms, with simple and often adorable code names (like Panda or Pigeon), to bring us results for each query we make. Googlebot constantly crawls public websites and adds them to the system index. These sites are ranked on many factors to determine where they appear on the results page. The most important factors include technical aspects, popularity of the site, quality of the content, and age of the site. Google’s algorithms mostly produce accurate, relevant results from authoritative and trusted websites, and everyone’s happy. However, in specific types of searches this system fails, leaving us with stale results and feeling frustrated.

Stale results pop up when the older, authoritative sites are shown by Google, even when they are offering outdated, maybe even inaccurate information.

Let’s look at some examples.

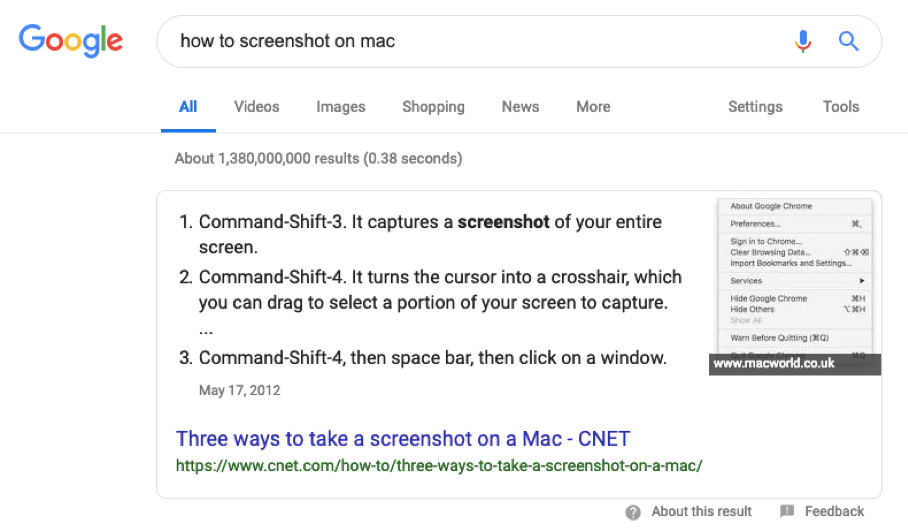

Here, you would like to know the shortcut for taking a screenshot on your Mac, so you search “how to screenshot on mac.”