As most of us (hopefully) know, one of the greatest problems facing our planet today is pollution. Tons of carbon emissions and immense trash heaps drown the environment, and this problem only increases with the population. We continue to create more and more waste, and fail to find an effective way to manage it.

But what does this have to do with Google search results?

Well, there is another kind of waste hiding beneath the headlines, impacting our daily lives: digital waste. This “digital waste” clouds up our search results, inhibiting us from finding the answers we need.

Google uses a variety of highly complex algorithms, with simple and often adorable code names (like Panda or Pigeon), to bring us results for each query we make. Googlebot constantly crawls public websites and adds them to the search index. These sites are ranked on many factors to determine where they appear on the results page. The most important factors include technical aspects, popularity of the site, quality of the content, and age of the site. Google’s algorithms mostly produce accurate, relevant results from authoritative and trusted websites, and everyone’s happy. However, in specific types of searches this system fails, leaving us frustrated with stale results.

Stale results pop up when Google shows older, authoritative sites even though they offer outdated, maybe even inaccurate, information.

Let’s look at some examples.

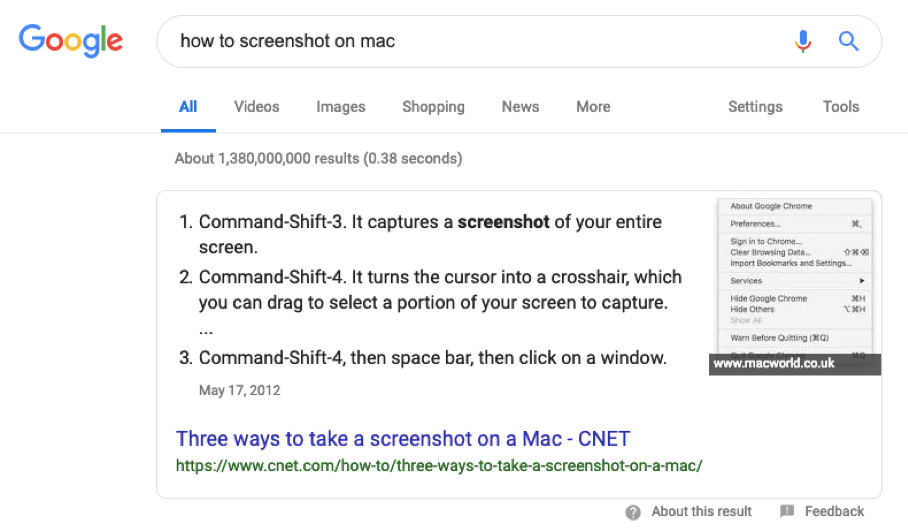

Here you would like to know the shortcut for taking a screenshot on your Mac, so you search “how to screenshot on mac.”

UPDATE 10/19: Google has confirmed the rollout of Penguin 3.0

I’ve only got one anecdote to support my suspicion, but a client who was a victim of Negative SEO lost all of their top 10 rankings, and as of today they are all back 100%!

Backstory: This client hired us in December 2013 to figure out why their rankings and organic traffic had dropped overnight on Oct 5, 2013 (date of the last Penguin update). We analyzed their backlinks using a variety of tools, and it didn’t take us long to discover they had been a victim of negative SEO. We found that over a period of 3 months, random spammy links pointing at their site had been added, to the tune of about 20,000 links a month! They had never done their own link building nor hired an SEO. Other than these links, they had a very small link profile, with only a few natural links.

Because the rankings and traffic did drop right in line with the Penguin update, and there were no warnings in Webmaster Tools of a manual penalty, we knew this was an algorithmic penalty associated with these links.

It took us about 3 months to discover and disavow these links, and the reason why is interesting. The links were not showing up on the page every time; they would only occasionally show up upon a refresh. Each refresh of these spam pages was filled with about 100 random links, and each refresh showed a new 100 links. So any link checker would find the links sometimes, but not other times. We had no choice but to keep running a link checker (3 different link checkers, as a matter of fact) about once a week. Each time we would discover new links that we hadn’t disavowed yet. It was a brilliant, sneaky black hat SEO attack.
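The weekly routine described above comes down to set arithmetic: take the union of every link any checker has ever reported, then subtract what has already been disavowed. The sketch below is a minimal illustration, not the tooling we actually used. The function names and example URLs are hypothetical, and it assumes each scan is available as a collection of URL strings and that the disavow file is plain text with one URL per line.

```python
def new_links_to_disavow(scans, already_disavowed):
    """Return links seen in any scan that are not yet disavowed, sorted."""
    seen = set()
    for scan in scans:
        # Each scan sees a different random subset of the rotating links,
        # so the union across scans is the best picture available.
        seen.update(scan)
    return sorted(seen - set(already_disavowed))


def to_disavow_lines(urls):
    """Format URLs one per line for a plain-text disavow file."""
    return "\n".join(urls)


# Hypothetical data: two weekly scans that only partly overlap.
scans = [
    {"http://spam-a.example/p1", "http://spam-b.example/p2"},
    {"http://spam-b.example/p2", "http://spam-c.example/p3"},
]
disavowed = {"http://spam-a.example/p1"}

pending = new_links_to_disavow(scans, disavowed)
print(to_disavow_lines(pending))
```

Because the spam pages rotated their links on every refresh, no single scan could be trusted as complete, which is why the union has to keep growing across weeks.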

By around February, we were confident we had disavowed about 90% of the spam links. But no recovery.

As we have all learned since then, a site must wait on Google to update the Penguin algorithm before any changes in rankings or recovery can take place. Unfair? Absolutely. Especially in the case of Negative SEO, which is real and does work. And in this case it took Google over a year to update Penguin. Imagine us reassuring this client that as soon as Google runs their update, their rankings will be back. “When will that be?” “Uh, Google won’t tell anyone, should be within 3 more months”.

In the meantime, we worked hard on content marketing, blogging for the client, getting high quality content published on their site and blog, building out Google Local pages, hoping that when the update hit, their site would be stronger than ever.

SUCCESS! ALL of their previous important keywords are back to the top 10, as of today October 18, 2014. Some of them even higher!

We are torn between being thrilled and being sickened that Google can allow something like this to happen.

SEO has evolved so much during the past 5 years that we even renamed our company to Web Moves. I absolutely love some of the brilliance I read from experts in our industry, and this guy could have been speaking words straight from my mouth.

Forbes – If You’re Good at These 3 Games, You’re Probably Good at SEO

Photo Credit: riograndegames.com

January 2013 – Last spring Google posted about Responsive Web Design on their official webmaster central blog and though the flavor of their article was fairly mild, they made it very clear that their “commitment to accessibility” includes a very important message to web designers – “Mark up one set of content, making it viewable on any device.”

Aaron Wall of SEOBook recently predicted that, in 2013, SEOs who “remain overly-public will continue to invent language to serve their own commercial purposes while chastising those who do not fall in line.” I appear to be living up to (the first part) of that promise because I’m calling it: the breakthrough ranking factor of 2013 will be “waves,” a term I just made up.

This will be a somewhat speculative post, so I feel compelled to say that these opinions are my own, and don’t necessarily reflect the opinions of Northcutt as a whole.

Where did this crazy idea come from? It started with the realization that, back in 2009, Google’s Chief Economist told McKinsey Quarterly “I keep saying the sexy job in the next ten years will be statisticians. People think I’m joking, but who would’ve guessed that computer engineers would’ve been the sexy job of the 1990s?”

It’s pretty much common knowledge: Web developers hate SEO experts. In all fairness, however, the feeling is mutual. But there are some good reasons for this culture clash.

“Same Thing” Sickness

One thing that SEOs hate about web developers has to do with the way they execute, or fail to carry out, a very specific request.

A case in point: An SEO asks a developer to create a 301 redirect between pages. The developer does a meta redirect or a 302 redirect instead, claiming that it’s the “same thing”.

From the developer’s perspective, it’s the same thing for the user, but from an SEO standpoint, it affects the search engine rankings.
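To make the distinction concrete, here is a minimal sketch that labels a response by the kind of redirect it performs. The function name `classify_redirect` and the regular expression are illustrative assumptions, and the regex is only a rough check for a meta refresh tag, not a full HTML parser.

```python
import re
from http import HTTPStatus

# Rough pattern for <meta http-equiv="refresh" ...> (an approximation only).
META_REFRESH = re.compile(r'<meta[^>]+http-equiv=["\']?refresh', re.IGNORECASE)


def classify_redirect(status, body=""):
    """Label a response as a permanent, temporary, or meta-refresh redirect."""
    if status in (HTTPStatus.MOVED_PERMANENTLY, HTTPStatus.PERMANENT_REDIRECT):
        return "permanent"      # 301 / 308: the move is declared permanent
    if status in (HTTPStatus.FOUND, HTTPStatus.SEE_OTHER,
                  HTTPStatus.TEMPORARY_REDIRECT):
        return "temporary"      # 302 / 303 / 307: the move is declared temporary
    if status == HTTPStatus.OK and META_REFRESH.search(body):
        return "meta-refresh"   # a normal 200 page that redirects in the browser
    return "none"


print(classify_redirect(301))
print(classify_redirect(302))
print(classify_redirect(200, '<meta http-equiv="refresh" content="0; url=/new">'))
```

All three send the visitor to the new page, which is why they look like the “same thing” in a browser; only the status code tells a crawler whether the move is meant to be permanent.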

The Death of Optimization

Developer skills and SEO techniques go hand in hand, so if a developer fails to do their job, it doesn’t matter what the SEO team does. Even with a copy of Google’s secret algorithm in hand, the site won’t rank if the site won’t work.

A case in point: A client implements some redesign elements. Suddenly, traffic drops by 30%.

The problem: Many of the pages don’t load like they should and the ones that do load show 500 server errors. The developer failed to spot the errors during the development process.

The result: 3 weeks of seriously diminished traffic.
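A basic status-code sweep before launch would have caught this kind of failure. The sketch below is one way to do it under stated assumptions: the function names and URL paths are hypothetical, the default fetcher uses only the standard library, and connection-level failures (DNS errors, timeouts) are deliberately left unhandled to keep it short.

```python
import urllib.error
import urllib.request


def fetch_status(url, timeout=10):
    """Return the HTTP status code for a URL (network call)."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.status
    except urllib.error.HTTPError as err:
        # urlopen raises HTTPError for 4xx/5xx; the code is still useful.
        return err.code


def find_server_errors(urls, fetch=fetch_status):
    """Map each URL that answers with a 5xx status to that status."""
    failures = {}
    for url in urls:
        status = fetch(url)
        if 500 <= status <= 599:
            failures[url] = status
    return failures


# Stubbed example so the sketch runs without a network connection.
fake_statuses = {"/": 200, "/pricing": 500, "/contact": 503}
broken = find_server_errors(fake_statuses, fetch=fake_statuses.get)
print(broken)
```

Passing the fetcher in as a parameter keeps the check testable against a staging site, a production site, or a stub like the one above.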

The “I Know SEO” Syndrome

This is a contagious disease that developers catch, and it can quickly spread to other developers. If you have ever heard a developer say something like “I’m pretty good at SEO,” it can usually be translated into “I’ve read a little about SEO and therefore I pretty much know more than you do.”

But wait a minute, SEOs. You aren’t immune, either. There is a related syndrome called “I can code”.

A case in point: An SEO expert successfully builds a WordPress site and suddenly deems themselves a web developer.

The Real Problem

At the root of the culture clash between coders and SEOs are their driving philosophies. Business classes that teach search engine optimization focus on uniqueness. After all, differentiating yourself from the competition is a good thing. On the other hand, computer science classes center on making everything the same. Each discipline takes a different approach to reaching the same result: stability and efficiency.

I wanted to publicly thank Bill Slawski from SEO by the Sea. I recently hooked up with Bill in a Google Hangout hosted by an old mate of mine, Dejan from DejanSEO. After listening to him talk about various SEO-related topics, I knew I wanted to hear more. I fired off an email offering to take him out to lunch and he responded promptly (maybe he was just hungry?), so we sorted out a day and time for me to come down to meet with him.

It was a pleasure to chat with an industry veteran and share some war stories. I am looking forward to learning from and working with Bill in the future. If you have the opportunity to listen to Bill speak, listen very carefully and take notes :-).

All this discussion about “Negative SEO” reminded me of a blog post that I wrote in 2008 on the same topic:

“I have been reading about and studying the term negative search engine optimization (SEO). I find it quite funny how people at Google, Matt Cutts in particular, say Google bombing or negative SEO is difficult or nearly impossible without hijacking someone’s website. This is totally wrong, but then again he is the same guy saying you need links to get your site to rank, but don’t buy them… (If I hear that loaded answer one more time!).

Google is very smart. What they do is rely on a human being’s general perception that if something is deemed wrong or generally unacceptable, most will avoid it. They have been pounding down everyone’s throat that paid links are bad, and that if you buy links you’re a bad person or an evil webmaster. They talk about penalties and banning websites. In the past penalties were very rare, but recently I have seen several link buyers penalized.

As soon as I see a client penalized, I see the clear path to negative SEO. Each and every time I see or hear about a website penalized for off-site SEO work, I create a log of what transpired and what the effects were. Does Google think SEOs, or people involved in search engine optimization, are dumb? Well, we are not. I have a list of places where links are penalized, I have a list of the types and quantities of links that have been penalized, and I know how long it takes and how to avoid it or how to cause it.

I am not talking about buying Viagra links for a website ranked number one for the search term “homes.” But if you know that the site ranked #1 for “homes” also ranks between 4 and 6 for “home sales,” “sell homes,” “buy homes,” etc., and that these are the keywords a top-quality website can be penalized for, then you simply build just a few too many really good links. Do not be obvious; do 100% legit SEO work (buying links is OK in my book) for the target site, just do a little too much, a little too quickly, and guess what: they will be given a -30 or a -45 for several of the over-optimized keywords. They will not be penalized for their primary keyword, which would require a 100% hand removal or a hand penalty from Google, but it is not difficult to get them hammered for their second-tier search words.

Needless to say, I can present Google with multiple instances of proof that this is a fact, and I can certainly repeat the results if required.

Google is crossing its fingers that the general SEO community will frown on negative SEO because of the bad karma, but in reality what is the difference between getting your site to rank higher (therefore lowering your competitor’s site) and simply lowering your competitor’s site so your site ranks higher?

Google is really beginning to upset me with these link threats and link penalties. If they continue this barrage on SEO companies, everyone will just do very low-profile SEO for their clients’ sites and over-optimize 3-4 of their competitors’ websites. When multiple SEO companies adopt this approach, Google is really screwed. They will lose nearly all control, and be left unable to properly determine which sites should be rewarded and which should be penalized.

For now Google is OK, because of the bad karma factor and the “it’s wrong to do this” feeling, but it will only take Google penalizing 2-3 top clients before SEO companies all decide it is so much easier, and more profitable, to negative SEO their competitors.

I will say that it is NOT hard, it is NOT difficult. If a group of top SEO and link builders got together, they could probably get nearly any site penalized.”

Marketing has always been about customer psychology: learning the specific needs of a potential client and trying to satisfy them. Google has demonstrated once again that targeting a specific audience is mandatory. And if you are into SEO, you should be aware of it.

According to one recently published study, Google Search results on a smartphone vary by over 80% from those produced by a desktop computer query. If you think about it, it is actually quite logical to have a slightly different search algorithm for smartphones. For example, smartphone users like downloading various applications, so mobile Google Search presents many results that include the word “app” or “download”. Brand filters and store filters cannot be applied to mobile Google Search, and it is even more biased towards “local” domains, with Google Places usually appearing higher in the vertical results list.
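The study’s exact methodology is not given here, so the following is only one plausible way to arrive at a figure like that: compare the two result lists position by position and count the slots that differ. The function name and the sample result lists are hypothetical.

```python
def percent_different(desktop, mobile):
    """Percentage of result positions where desktop and mobile disagree.

    Assumption: results are compared slot by slot over the shorter list.
    The study cited above may have measured the difference another way.
    """
    compared = min(len(desktop), len(mobile))
    if compared == 0:
        return 0.0
    differing = sum(1 for d, m in zip(desktop, mobile) if d != m)
    return 100.0 * differing / compared


# Hypothetical top-5 results for the same query on each device type.
desktop_results = ["a.example", "b.example", "c.example", "d.example", "e.example"]
mobile_results = ["a.example", "x.example", "b.example", "y.example", "z.example"]

print(percent_different(desktop_results, mobile_results))
```

Note that under this definition a result that merely moves down one position counts as a difference, so a high percentage does not necessarily mean the two lists share few sites.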

With the smartphone market growing quickly, it seems only a matter of time before “SEO for mobile” becomes a separate branch of the industry.

Google has recently announced that Google Instant Mobile is now available “globally”. This means that the tool has been released in all countries that have Google Mobile access (there are several dozen of those) and supports almost thirty languages.

The product, like Google Instant Mobile, is integrated into the search features of any Android browser (built in for Android OS 2.2 and up), and features various algorithms that allow faster dynamic search results.

Although this release was expected (shortly after releasing Google Instant Mobile in English, the company had announced that international support was on its way), nobody anticipated that it would happen so quickly. The roll-out took Google slightly over one month, an incredible figure considering the complexity of the product. Of course, this simply means that Google had been working on the globalization of Google Instant Mobile simultaneously with the product itself. That is no wonder: Google has always emphasized the importance of international marketing and global support.